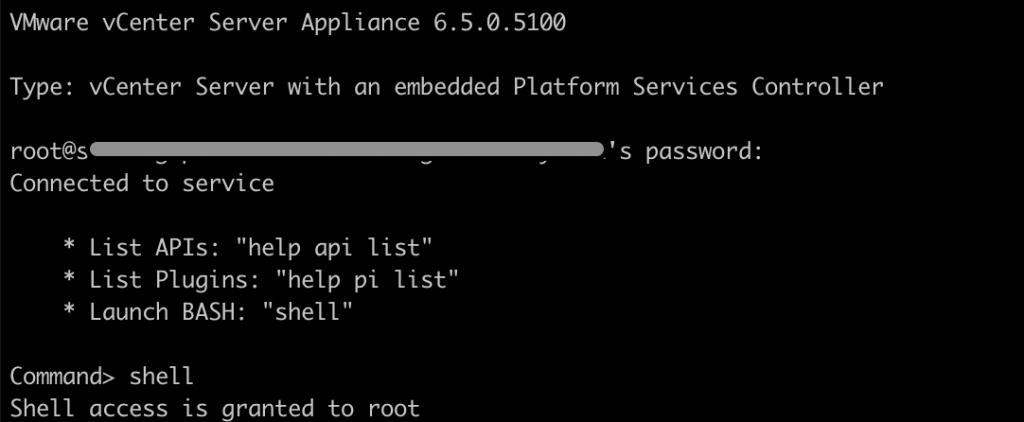

This post shows how to create an SSO user account from an SSH session on the vCenter Server Appliance (VCSA) using the dir-cli utility. With dir-cli you can create and manage SSO users within the Platform Services Controller (PSC).

The dir-cli utility allows you to create and update solution users, create other user accounts, and manage certificates and passwords in vmdir. Use this utility together with vecs-cli and certool to manage your certificate infrastructure.

Prerequisites:

SSH root access to the VCSA, plus the password of the SSO Administrator account.
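Before you start, you can check part of the first prerequisite from the Bash prompt. This is a minimal sketch. It only confirms that you are root and that dir-cli exists at the path used in this post (`/usr/lib/vmware-vmafd/bin/dir-cli` on VCSA 6.5). It cannot verify the SSO Administrator password, which dir-cli asks for later. `check_prereqs` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Hypothetical preflight helper (not part of VCSA): checks that we run as root
# and that dir-cli is present. The default path is the one used in this post.
check_prereqs() {
  local dir_cli="${1:-/usr/lib/vmware-vmafd/bin/dir-cli}"
  if [ "$(id -u)" -ne 0 ]; then
    echo "error: run this as root on the VCSA" >&2
    return 1
  fi
  if [ ! -x "$dir_cli" ]; then
    echo "error: dir-cli not found or not executable at $dir_cli" >&2
    return 1
  fi
  echo "ok: running as root, dir-cli found at $dir_cli"
}
```

On the appliance, run `check_prereqs` with no arguments. If dir-cli lives elsewhere on your build, pass its path as the first argument.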

How to create a new vCenter SSO user account using the CLI?

1. Log in to VCSA 6.5 as root over SSH.

2. You will get a command prompt.

3. Create a new user account in SSO using dir-cli.

root@gopal [ ~ ]# /usr/lib/vmware-vmafd/bin/dir-cli user create --account gopal --first-name gopal --last-name krishna --user-password 'AllowMe@123'

Enter password for administrator@vsphere.local:

User account [gopal] created successfully

4. Add the user to the Administrators group or to your custom group.

root@gopal [ ~ ]# /usr/lib/vmware-vmafd/bin/dir-cli group modify --name Administrators --add gopal

Enter password for administrator@vsphere.local:

Account [gopal] added to group [Administrators]

Group member [gopal] added successfully

5. List the members of the Administrators group.

root@gopal [ ~ ]# /usr/lib/vmware-vmafd/bin/dir-cli group list --name Administrators

Enter password for administrator@vsphere.local:

cn=Administrator,cn=Users,dc=vsphere,dc=local

CN=machine-xxxxxx-18f4-4a97-bdf0-df9adc8f9458,CN=ServicePrincipals,DC=vsphere,DC=local

CN=vsphere-webclient-xxxxxx-18f4-4a97-bdf0-df9adc8f9458,CN=ServicePrincipals,DC=vsphere,DC=local

CN=gopal krishnan,cn=users,dc=vsphere,dc=local

6. You are done. Log in to the vSphere Web Client with the new account's credentials.
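If you create SSO users often, the steps above can be wrapped in a small Bash script. This is a minimal sketch, not a VMware tool. It reuses only the dir-cli options shown in steps 3 and 4. The `DRY_RUN` switch and the function names `create_sso_user` and `is_group_member` are hypothetical conventions of this sketch. dir-cli still prompts for the SSO Administrator password on every real call. `is_group_member` matches on the CN because the step 5 listing shows the full name (for example "gopal krishnan"), not the account name.

```shell
#!/usr/bin/env bash
# Minimal sketch wrapping steps 3 to 5 of this post. Not a VMware tool.
# DIR_CLI defaults to the path used above on VCSA 6.5.
DIR_CLI="${DIR_CLI:-/usr/lib/vmware-vmafd/bin/dir-cli}"

# run: execute a command, or only print it when DRY_RUN=1
# (DRY_RUN is a convention of this sketch, not a dir-cli option).
# Note that a dry run prints the user password in clear text.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf 'DRY-RUN: %s\n' "$*"
  else
    "$@"
  fi
}

# Steps 3 and 4: create the SSO user, then add it to a group.
# Usage: create_sso_user ACCOUNT FIRST LAST PASSWORD [GROUP]
create_sso_user() {
  local account="$1" first="$2" last="$3" password="$4" group="${5:-Administrators}"
  run "$DIR_CLI" user create --account "$account" \
    --first-name "$first" --last-name "$last" --user-password "$password" || return 1
  run "$DIR_CLI" group modify --name "$group" --add "$account"
}

# Step 5 helper: read `dir-cli group list` output on stdin and succeed if a
# member DN starts with cn=<full name> (compared case-insensitively).
is_group_member() {
  awk -F, -v want="cn=$1" '
    tolower($1) == tolower(want) { found = 1 }
    END { exit !found }
  '
}
```

For example, `DRY_RUN=1 create_sso_user gopal gopal krishna 'AllowMe@123'` only prints the two dir-cli commands. This lets you review them before running them for real.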

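The appliance shell offers another way to work with accounts: it lists and manages the local user accounts of the vCenter Server Appliance itself. These appliance accounts are separate from the SSO users created with dir-cli above.

Listing the local user accounts first helps you decide which account to manage from the appliance shell.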

- Access the appliance shell and log in as a user who has the super administrator role. The default user with the super administrator role is root.

Command> localaccounts.user.list

Config:

1:

Username: root

Status: enabled

Role: superAdmin

Passwordstatus: valid

Fullname: root

Email: ''

Command>

The output lists the local users. For each user it shows the user name, status, role, password status, full name, and email.
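If you save this output to a file, a few lines of awk can turn it into a compact table. For example, you could copy it out of the SSH session. This sketch assumes the `Key: value` layout shown above, with Role appearing after Username and Status for each user. The function name `list_local_users` and the file name `users.txt` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads saved `localaccounts.user.list` output on stdin
# and prints one line per account: username<TAB>role<TAB>status.
# Assumes the order Username, Status, Role within each entry, as shown above.
list_local_users() {
  awk -F': *' '
    { sub(/^[ \t]+/, "") }            # drop any indentation before the key
    $1 == "Username" { user = $2 }
    $1 == "Status"   { status = $2 }
    $1 == "Role"     { print user "\t" $2 "\t" status }
  '
}
```

Running `list_local_users < users.txt` on the listing above prints a single line for root with the role superAdmin and the status enabled.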

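Create the appliance management account. This example assigns the operator role, and the command prompts for the new account's password twice.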

Command> localaccounts.user.add --role operator --username gopal --password

Enter password:

Reenter password:

Command>

List the local accounts again to confirm that the new account was created:

Command> localaccounts.user.list

Config:

1:

Username: root

Status: enabled

Role: superAdmin

Passwordstatus: valid

Fullname: root

Email: ''

2:

Username: gopal

Status: enabled

Role: operator

Passwordstatus: valid

Fullname: gopal krishnan

Email: ''

root@gopal [ ~ ]#
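You can also check the result from a script rather than by eye, using the saved listing. This is a minimal sketch that assumes the layout shown above. `check_local_user` and `users_after.txt` are hypothetical names. The function succeeds only if the named account exists, is enabled, and has the expected role.

```shell
#!/usr/bin/env bash
# Hypothetical check: reads saved `localaccounts.user.list` output on stdin
# and exits 0 only if USER exists, has Status "enabled", and has role ROLE.
# Usage: check_local_user USER ROLE < saved_output
check_local_user() {
  awk -F': *' -v u="$1" -v r="$2" '
    { sub(/^[ \t]+/, "") }                 # ignore indentation
    $1 == "Username" { user = $2 }
    $1 == "Status"   { status = $2 }
    $1 == "Role" && user == u { found = 1; ok = ($2 == r && status == "enabled") }
    END { exit !(found && ok) }
  '
}
```

For example: `check_local_user gopal operator < users_after.txt && echo "gopal is an enabled operator"`.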